Page 25 - ITU Journal, ICT Discoveries, Volume 3, No. 1, June 2020 Special issue: The future of video and immersive media



The stream packager takes the encoded videos and converts the memory bits into consumable bitstreams. The content distribution network (CDN) provides the distribution component of the pipeline, streaming the content to client players.

On the client side, the stream de-packager reads the consumable bitstreams and feeds them to the decoder. The client player's video decoder then decompresses the video bitstream. Finally, the video renderer takes the decoded video sequences and renders them, according to user preference, into what is seen on the device.
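
To make the packager and de-packager roles more concrete, the sketch below uses a toy type-length framing: each encoded payload is written as a 1-byte type, a 4-byte big-endian length and the payload bytes, and the de-packager walks the stream to recover them. The framing and the functions `package` and `depackage` are hypothetical illustrations, not the segment or container format of any real streaming system.

```python
import struct

HEADER = ">BI"                        # hypothetical record header: 1-byte type, 4-byte length
HEADER_SIZE = struct.calcsize(HEADER)  # 5 bytes (no padding with ">")


def package(segments):
    """Hypothetical packager: concatenate encoded payloads as
    (type, length, payload) records in one consumable byte stream."""
    out = bytearray()
    for kind, payload in segments.items():
        out += struct.pack(HEADER, kind, len(payload)) + payload
    return bytes(out)


def depackage(stream):
    """Inverse of package(): split the byte stream back into payloads by type."""
    segments, pos = {}, 0
    while pos < len(stream):
        kind, length = struct.unpack_from(HEADER, stream, pos)
        pos += HEADER_SIZE
        segments[kind] = stream[pos:pos + length]
        pos += length
    return segments
```

A length prefix lets the reader skip or extract each payload without scanning for delimiters, which is why many real formats use some form of it.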

3. MPEG IMMERSIVE MEDIA STANDARDS

MPEG is developing immersive codecs to compress and stream volumetric content. The emerging MPEG immersive media standards support point clouds and related data types, as well as immersive video formats. A brief description of the immersive codecs is given here to establish the technical background for the object-based implementation described in a later section.

3.1 Video-based point-cloud coding (V-PCC)

Patch

Bitstream

Encoding Layer

Point Cloud Geometry

Geometry Generation Image Padding Padded Geometry Decoded

Images

& Texture Images

Occupancy

Texture Reconstruction Video Encode Occupancy Map Decoding Occupancy Map

Images

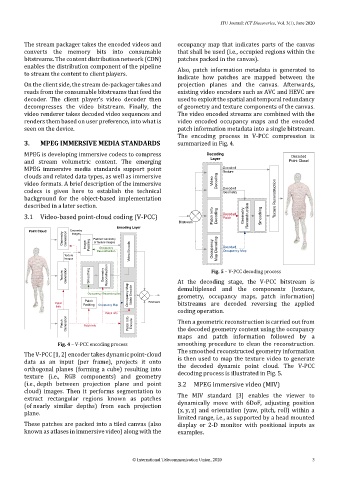

Fig. 5 – V-PCC decoding process

Texture Generation Smoothing Geometry Reconstruction At the decoding stage, the V-PCC bitstream is

Occupancy Reconstruction Occupancy Map Video Encode demultiplexed and the components (texture,

geometry, occupancy maps, patch information)

Patch Bitstream

Patch Packing Occupancy Map bitstreams are decoded reversing the applied

Info

coding operation.

Patch Info

Patch Generation Patch Info Patch Info Encode Then a geometric reconstruction is carried out from

the decoded geometry content using the occupancy

maps and patch information followed by a

Fig. 4 – V-PCC encoding process smoothing procedure to clean the reconstruction.
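
The projection and segmentation steps can be sketched as follows, assuming integer point coordinates and precomputed per-point normals. Each point is assigned to the cube face whose direction best matches its normal, projected to (u, v) plus a depth, and 4-connected pixels with similar depths are grouped into patches. The helper names and the `max_depth_jump` threshold are hypothetical; the V-PCC reference encoder refines the plane assignment and uses its own segmentation rules, which are not reproduced here.

```python
from collections import deque

import numpy as np

# Directions of the six cube faces: +X, -X, +Y, -Y, +Z, -Z (plane indices 0-5).
AXES = np.array([[1, 0, 0], [-1, 0, 0],
                 [0, 1, 0], [0, -1, 0],
                 [0, 0, 1], [0, 0, -1]], dtype=float)


def assign_planes(normals):
    """Index of the face whose direction has the largest dot product with each normal."""
    return np.argmax(normals @ AXES.T, axis=1)


def project_to_plane(points, plane):
    """Return (u, v) pixel coordinates and depth for points assigned to `plane`."""
    axis = plane // 2                                  # 0 = X, 1 = Y, 2 = Z
    uv_axes = [a for a in range(3) if a != axis]
    coord = points[:, axis].astype(int)
    # Depth is the distance from the face the points look towards, so the
    # point closest to that face gets the smallest depth.
    depth = coord.max() - coord if plane % 2 == 0 else coord - coord.min()
    return points[:, uv_axes].astype(int), depth


def segment_patches(uv, depth, max_depth_jump=4):
    """Group projected samples into patches: 4-connected pixels whose depths
    differ by at most `max_depth_jump` (hypothetical threshold)."""
    # Keep only the nearest sample per pixel, as a depth image would.
    pixel_depth = {}
    for (u, v), d in zip(uv.tolist(), depth.tolist()):
        if (u, v) not in pixel_depth or d < pixel_depth[(u, v)]:
            pixel_depth[(u, v)] = d
    patches, seen = [], set()
    for start in pixel_depth:
        if start in seen:
            continue
        seen.add(start)
        queue, patch = deque([start]), []
        while queue:                                   # breadth-first flood fill
            u, v = queue.popleft()
            d = pixel_depth[(u, v)]
            patch.append((u, v, d))
            for nb in ((u + 1, v), (u - 1, v), (u, v + 1), (u, v - 1)):
                if (nb in pixel_depth and nb not in seen
                        and abs(pixel_depth[nb] - d) <= max_depth_jump):
                    seen.add(nb)
                    queue.append(nb)
        patches.append(patch)
    return patches
```

For example, a flat 3×3 square and a separate 2×2 square, both facing +Z, end up on plane 4 and yield two patches of 9 and 4 samples.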

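These patches are packed into a tiled canvas (also known as an atlas in immersive video) along with an occupancy map that indicates which parts of the canvas shall be used (i.e., the occupied regions within the patches packed in the canvas). Patch information metadata is also generated to indicate how patches are mapped between the projection planes and the canvas. Afterwards, existing video encoders such as AVC and HEVC are used to exploit the spatial and temporal redundancy of the geometry and texture components of the canvas. The encoded video streams are combined with the video-encoded occupancy maps and the encoded patch information metadata into a single bitstream.

The encoding process in V-PCC compression is summarized in Fig. 4.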
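
A minimal packing sketch, under simplifying assumptions: each patch is a list of (u, v, depth) samples, patch bounding boxes are placed on a fixed-width canvas with a naive shelf (row-by-row) strategy, and the occupancy map simply marks pixels that carry a sample. `Patch`, `PatchInfo` and `pack_patches` are hypothetical names; the actual V-PCC packer, occupancy precision and patch metadata syntax differ.

```python
from dataclasses import dataclass

import numpy as np


@dataclass
class Patch:
    plane: int        # index of the projection plane (0-5)
    pixels: list      # (u, v, depth) samples in projection-plane coordinates


@dataclass
class PatchInfo:      # hypothetical stand-in for the patch metadata
    plane: int
    u_min: int        # bounding-box origin on the projection plane
    v_min: int
    x0: int           # placement on the canvas (column, row)
    y0: int
    width: int
    height: int


def pack_patches(patches, canvas_width):
    """Shelf-pack patch bounding boxes into a canvas and build the
    occupancy map, geometry (depth) image and patch metadata."""
    boxes = []
    for p in patches:
        us = [u for u, _, _ in p.pixels]
        vs = [v for _, v, _ in p.pixels]
        boxes.append((min(us), min(vs), max(us) - min(us) + 1, max(vs) - min(vs) + 1))
    order = sorted(range(len(patches)), key=lambda i: -boxes[i][3])  # tallest first
    infos, x, y, shelf_h = [None] * len(patches), 0, 0, 0
    for i in order:
        u_min, v_min, w, h = boxes[i]
        if w > canvas_width:
            raise ValueError("patch is wider than the canvas")
        if x + w > canvas_width:                 # no room left: open a new shelf
            x, y, shelf_h = 0, y + shelf_h, 0
        infos[i] = PatchInfo(patches[i].plane, u_min, v_min, x, y, w, h)
        x, shelf_h = x + w, max(shelf_h, h)
    canvas_height = y + shelf_h
    occupancy = np.zeros((canvas_height, canvas_width), dtype=np.uint8)
    geometry = np.zeros((canvas_height, canvas_width), dtype=np.uint16)
    for p, info in zip(patches, infos):
        for u, v, d in p.pixels:
            row, col = info.y0 + (v - info.v_min), info.x0 + (u - info.u_min)
            occupancy[row, col] = 1              # this canvas pixel carries a sample
            geometry[row, col] = d
    return occupancy, geometry, infos
```

Shelf packing is chosen here only because it is short; any placement works as long as the patch metadata records where each patch landed, since that is what the decoder needs to undo the mapping.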

(i.e., depth between projection plane and point 3.2 MPEG immersive video (MIV)

cloud) images. Then it performs segmentation to

extract rectangular regions known as patches The MIV standard [3] enables the viewer to

(of nearly similar depths) from each projection dynamically move with 6DoF, adjusting position

plane. (x, y, z) and orientation (yaw, pitch, roll) within a
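
The decoder-side steps can be illustrated by inverting a simple packing: for each patch, occupied canvas pixels are mapped back to 3-D points using the patch metadata, the texture image supplies the color, and a crude neighborhood average stands in for smoothing. This sketch assumes the geometry image stores the coordinate along the plane's normal axis directly; `PatchInfo`, `reconstruct` and `smooth` are hypothetical, and the normative V-PCC reconstruction and smoothing filters are more involved.

```python
from dataclasses import dataclass

import numpy as np


@dataclass
class PatchInfo:      # hypothetical decoded patch metadata
    plane: int        # plane // 2 is the normal axis (0 = X, 1 = Y, 2 = Z)
    u_min: int        # patch origin on the projection plane
    v_min: int
    x0: int           # patch position on the canvas (column, row)
    y0: int
    width: int
    height: int


def reconstruct(occupancy, geometry, texture, infos):
    """Turn decoded occupancy, geometry and texture images back into colored points."""
    points, colors = [], []
    for info in infos:
        axis = info.plane // 2
        u_axis, v_axis = [a for a in range(3) if a != axis]
        for r in range(info.height):
            for c in range(info.width):
                row, col = info.y0 + r, info.x0 + c
                if not occupancy[row, col]:
                    continue                      # unoccupied pixel: no point here
                p = [0, 0, 0]
                p[u_axis] = info.u_min + c
                p[v_axis] = info.v_min + r
                p[axis] = int(geometry[row, col])  # simplified: depth used as the coordinate
                points.append(p)
                colors.append(texture[row, col])  # texture mapped from the same pixel
    return np.array(points, dtype=float), np.array(colors)


def smooth(points, radius=1.5):
    """Crude smoothing stand-in: replace each point by the mean of all points
    within `radius` (itself included). Brute force, O(N^2)."""
    diff = points[:, None, :] - points[None, :, :]
    near = (np.linalg.norm(diff, axis=-1) <= radius).astype(float)
    return (near @ points) / near.sum(axis=1, keepdims=True)
```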

3.2 MPEG immersive video (MIV)

The MIV standard [3] enables the viewer to move dynamically with six degrees of freedom (6DoF), adjusting position (x, y, z) and orientation (yaw, pitch, roll) within a limited range, for example as supported by a head-mounted display or a 2-D monitor with positional inputs.
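
A 6DoF viewing pose is a position plus three rotation angles. The sketch below builds a rotation matrix from (yaw, pitch, roll), derives a world-to-camera matrix, and clamps the position to a box to mimic the limited range of motion. The Z-Y-X rotation order (yaw about Z, pitch about Y, roll about X), the `Pose` type and the box limits are assumptions for illustration; the MIV specification defines its own normative coordinate conventions.

```python
import math
from dataclasses import dataclass

import numpy as np


@dataclass
class Pose:
    x: float          # position
    y: float
    z: float
    yaw: float        # orientation, in radians
    pitch: float
    roll: float


def rotation_matrix(yaw, pitch, roll):
    """R = Rz(yaw) @ Ry(pitch) @ Rx(roll) (assumed Z-Y-X convention)."""
    cy, sy = math.cos(yaw), math.sin(yaw)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cr, sr = math.cos(roll), math.sin(roll)
    rz = np.array([[cy, -sy, 0], [sy, cy, 0], [0, 0, 1]])
    ry = np.array([[cp, 0, sp], [0, 1, 0], [-sp, 0, cp]])
    rx = np.array([[1, 0, 0], [0, cr, -sr], [0, sr, cr]])
    return rz @ ry @ rx


def clamp_to_viewing_space(pose, lo, hi):
    """Keep the viewer inside an axis-aligned box (hypothetical limits)."""
    x, y, z = (min(max(v, l), h) for v, l, h in zip((pose.x, pose.y, pose.z), lo, hi))
    return Pose(x, y, z, pose.yaw, pose.pitch, pose.roll)


def view_matrix(pose):
    """4x4 world-to-camera transform: inverse of the camera's rotation and translation."""
    r = rotation_matrix(pose.yaw, pose.pitch, pose.roll)
    t = np.array([pose.x, pose.y, pose.z])
    m = np.eye(4)
    m[:3, :3] = r.T
    m[:3, 3] = -r.T @ t
    return m
```

Under this convention a yaw of π/2 rotates the x axis onto the y axis, and the view matrix maps the viewer's own position to the camera origin.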

© International Telecommunication Union, 2020